
Peano


ADER-DG conceptually consists of only three steps: prediction, Riemann solve and correction. While this is simple in principle, challenges arise once we consider dynamic AMR or local time stepping. This section discusses different ADER-DG implementation flavours, which differ in the way they map the three core steps onto mesh traversals.

It is important to reiterate the data dependencies and to introduce some intermediate steps which are required to couple the core algorithmic steps:

To discuss the temporal logic of these steps, it is important to reiterate that Peano runs through the underlying spacetree in a top-down fashion and users can only plug into vertex, face and cell events. That is, we can never access the adjacent cells of a cell or a face, and we have no control over the order of the traversal. We only know that it follows these top-down/bottom-up constraints.

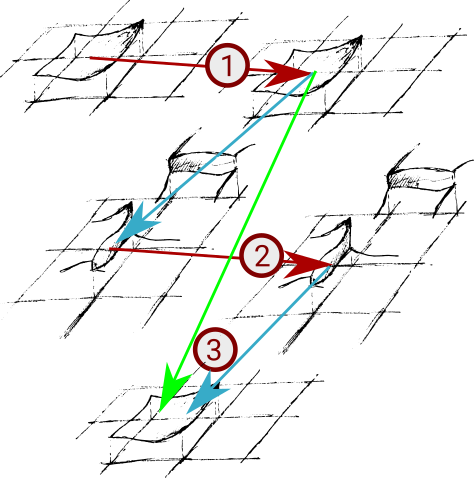

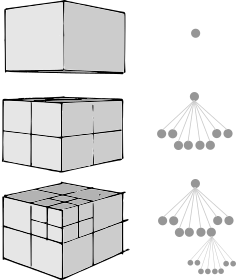

As we distinguish plug-in points (events triggering actions) for cells from those for faces, we can map a vanilla version of one ADER-DG step onto two mesh traversals:

1. touchCellFirstTime(): Compute the predictor and project the predicted solution onto the faces. This could alternatively happen within touchCellLastTime(): as we usually work on the finest level, the last touch (the action when the tree traversal automaton backtracks) happens immediately after the first touch. In our code, the projection onto the faces is integrated directly into the predictor. This way, we do not have to store the entire space-time solution, and we can reuse the flux computations of the predictor for the projections. Consequently, we do not need to recompute the fluxes from the projected values, and we obtain more accurate approximations of the fluxes on the face.
2. touchFaceLastTime(): Send out the projected data to neighbouring trees if we employ domain decomposition. This happens automatically and is handled by Peano's core. The step works with consolidated data, as touchFaceLastTime() happens after touchCellLastTime().
3. touchFaceFirstTime() of the subsequent traversal: Merge incoming data from other tree partitions. From here on, we have a valid, consistent view of the predicted data on a face from the left and the right side. This step is handled by Peano's core.
4. touchFaceFirstTime(): Solve the Riemann problem and store the outcome within the face.
5. touchCellFirstTime(): We know that touchFaceFirstTime() has already been called for the 2d adjacent faces of the cell (d being the spatial dimension). Therefore, we can now correct the solution. This step could also be realised within touchCellLastTime().

For a serial code, we could solve the Riemann problem straightaway in touchFaceLastTime(), since touchFaceLastTime() is invoked after touchCellLastTime() has been called for both adjacent cells (see Peano's generic description of the order of events over action sets).
This is an appealing idea, as we could then throw away the projected data and keep only the Riemann solve result. However, it breaks down in a parallel setup, where each subdomain first has to send out its contribution, and we have to merge these partial data sets prior to the next grid sweep.

In the context of adaptive meshes, it is important to take into account that

This is reflected by the order of the events. Therefore, whenever we project a solution onto a hanging face in step (1), this information will be lost after this mesh traversal. We have to save it by moving the information from the face to the next coarser level.

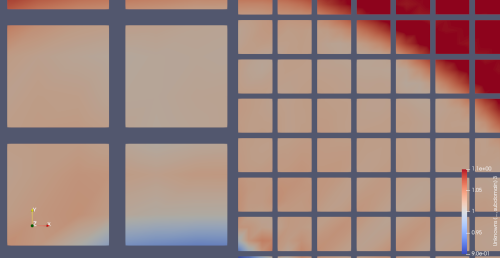

A straightforward implementation of ADER-DG for adaptive meshes hence commits to a "solve the Riemann problem only on persistent faces and project the outcome onto hanging faces" policy:

TODO: This documentation is now outdated with the introduction of persistent hanging grid data

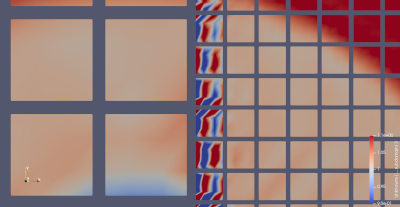

Unfortunately, some solvers seem to suffer from inaccurate Riemann solutions that arise from the interpolation. While we are unsure exactly what causes this, our current assumption is that one of two effects is responsible: either the concatenation of the fine faces or the solve on the coarse face causes information to propagate too quickly, forming large gradients that lead to instabilities.

If we want to solve the Riemann problem on the fine (hanging) faces, we have to be very careful:

Therefore, a fine grid solve is only possible if we modify our algorithm blueprint:

There is a price to pay for this realisation: some predictions are done twice (along the AMR boundary), and we traverse the mesh three times rather than twice. The latter is mitigated by the fact that we can work with smart pointers for the actual data, i.e. not that much data is shoveled through the memory subsystem, but it remains a severe overhead. The former can likely be optimised by recognising whether a cell is adjacent to a hanging face, a check which is not yet available. Given that the predictor is by far the most expensive operation in ADER-DG, this optimisation should be a high priority. Nevertheless, it makes sense to maintain both ADER-DG realisation variants: one working with two sweeps and one working with three sweeps.